How To Recreate A Website: A Complete Guide

Recreating a website can seem daunting, but it offers a fresh start and enhanced capabilities for your digital presence. The work involves careful planning and strategic execution to transform an existing site into a revitalized, modern platform.

The process systematically begins with a thorough understanding of the original site’s structure, content, and core functionalities. This foundational analysis then guides the selection of appropriate technologies and design tools, ensuring that both visual aesthetics and operational features are meticulously planned. Subsequently, the development phase focuses on seamless content integration, culminating in rigorous testing and strategic deployment to ensure a robust and high-performing new website.

Understanding the Existing Website Structure and Content

Before embarking on the recreation of an existing website, a thorough and systematic understanding of its current structure and content is paramount. This foundational analysis ensures that the new rendition accurately reflects the original’s intent, functionality, and user experience, while also providing a clear inventory of all necessary assets. A meticulous approach at this stage can significantly streamline the subsequent development phases, preventing oversights and rework.

A comprehensive analysis of a website’s overall layout, navigation, and user experience (UX) is crucial for replicating its essence and functionality. This involves more than just observing; it requires a structured approach to deconstruct how the site is built and how users interact with it. The following steps outline a systematic approach to analyze these critical aspects:

- Layout and Visual Hierarchy: Examine the site’s grid system, spacing, typography, color schemes, and the visual weight given to different elements. Identify recurring patterns in how information is presented, such as header styles, content blocks, and footer design. Pay attention to how the layout adapts across various screen sizes, indicating its responsiveness.

- Navigation Structure: Map out all primary and secondary navigation menus, including dropdowns, mega menus, breadcrumbs, and footer links. Understand the logical flow between pages and identify any search functionalities or sitemap links that aid user orientation. This reveals the site’s information architecture.

- User Experience (UX) Flow: Trace common user journeys through the website. Observe how calls-to-action (CTAs) are positioned and phrased, how forms are designed, and the overall interactivity of elements like carousels, accordions, or interactive maps. Assess the ease with which users can find information, complete tasks, and understand the site’s purpose.

- Interactive Elements and Features: Document any unique interactive features such as calculators, quizzes, live chat widgets, embedded media players, or custom animations. Understanding these elements is key to replicating the dynamic aspects of the original site.

- Content Presentation: Note how different types of content (e.g., articles, product listings, testimonials) are presented. Observe the use of headings, paragraphs, lists, and media integration within content blocks to understand the site’s content strategy.

Systematically Extracting Text Content, Images, and Media Files

Efficiently gathering all existing content is a critical step in the recreation process. This involves employing various methods and tools to systematically extract text, images, and other media assets from the source website, ensuring nothing is overlooked. Here are proven methods for systematically extracting various content types:

- Text Content: For individual pages, manual copy-pasting is effective. For larger sites, browser extensions designed for saving web pages (e.g., “Save Page As” in browsers, or specialized text extraction tools) can capture text along with its formatting. Web scraping tools, when used ethically and within legal boundaries, can automate the extraction of specific text elements across multiple pages.

- Images: Individual images can often be saved by right-clicking and selecting “Save Image As.” For bulk extraction, browser developer tools (specifically the “Network” or “Elements” tab) can reveal image URLs, which can then be downloaded using browser extensions or command-line tools. Dedicated image downloaders or web scrapers can also automate this process, provided the site’s terms of service are respected.

- Media Files (Videos, Audio): Extracting video and audio files often requires identifying their direct source URLs. Browser developer tools are invaluable here; monitoring the “Network” tab while media plays will typically reveal the direct link to the `.mp4`, `.webm`, `.mp3`, or other media files. Specialized media downloaders or browser extensions can then be used to save these files, always adhering to copyright laws and fair use principles.

- Document Files (PDFs, DOCs): Look for direct links to downloadable documents. These are usually straightforward to download by clicking the link or right-clicking and selecting “Save Link As.” Ensure all linked documents are accounted for and saved.
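The extraction methods above can be partly automated. The following is a minimal sketch, using only the Python standard library, that collects visible text plus image, media, and document URLs from a single saved page. The class name `AssetExtractor`, the sample HTML, and the `example.com` base URL are illustrative assumptions, and real pages (lazy-loaded images, `srcset`, JavaScript-rendered content) will need more handling than this.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class AssetExtractor(HTMLParser):
    """Collects visible text plus image, media, and document URLs from one page."""

    DOCUMENT_SUFFIXES = (".pdf", ".doc", ".docx")

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.text_parts = []
        self.images = []
        self.media = []
        self.documents = []
        self._skip_depth = 0  # greater than 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "img" and attrs.get("src"):
            self.images.append(urljoin(self.base_url, attrs["src"]))
        elif tag in ("video", "audio", "source") and attrs.get("src"):
            self.media.append(urljoin(self.base_url, attrs["src"]))
        elif tag == "a" and attrs.get("href"):
            href = attrs["href"]
            if href.lower().endswith(self.DOCUMENT_SUFFIXES):
                self.documents.append(urljoin(self.base_url, href))

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is not script or style content.
        if not self._skip_depth and data.strip():
            self.text_parts.append(data.strip())


# Hypothetical page content standing in for a saved copy of the original site.
sample_html = """
<html><head><style>p { color: red; }</style></head>
<body>
  <h1>About Us</h1>
  <p>We build things.</p>
  <img src="/img/team.png" alt="Team">
  <video src="media/intro.mp4"></video>
  <a href="/files/brochure.pdf">Brochure</a>
</body></html>
"""

extractor = AssetExtractor("https://www.example.com/about/")
extractor.feed(sample_html)
print(extractor.text_parts)
print(extractor.images, extractor.media, extractor.documents)
```

Relative paths are resolved against the page URL with `urljoin`, so the resulting lists can be fed directly to whichever download tool you prefer, subject to the site’s terms of service.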

“Thorough content extraction is the digital equivalent of taking a precise inventory. Every piece of information, from a single text character to a high-resolution video, must be accounted for to ensure a complete and accurate recreation.”

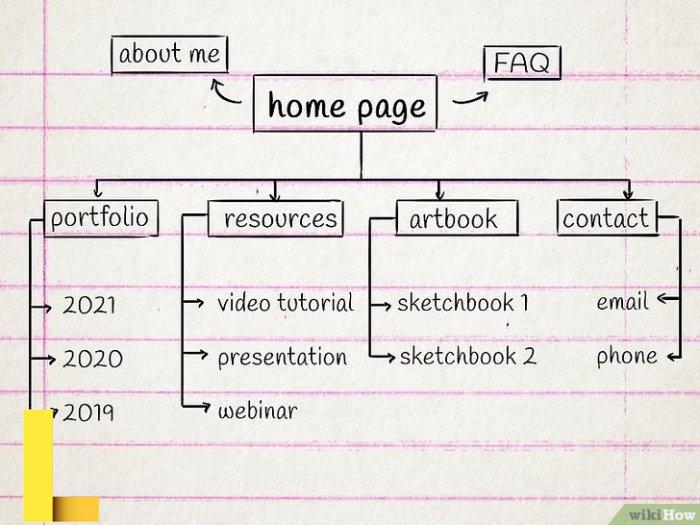

Identifying and Documenting Unique Pages and Their Hierarchical Relationships

Understanding the complete scope of a website means identifying every unique page and mapping out how they relate to each other. This hierarchical understanding forms the backbone of the new site’s architecture and ensures all content is properly categorized and accessible. Strategies for identifying and documenting all unique pages and their hierarchical relationships include:

- Manual Site Exploration: Begin by systematically navigating through every link on the website, starting from the homepage. Create a running list of all unique URLs encountered. This manual process helps in understanding the user journey and discovering pages not easily found through automated means.

- Utilizing Sitemaps: Check for a `sitemap.xml` file (e.g., `www.example.com/sitemap.xml`) or a `sitemap.html` page. These resources often provide a comprehensive list of all indexed pages, offering a quick overview of the site’s structure.

- Web Crawling Tools: Employ web crawling software such as Screaming Frog SEO Spider, Xenu’s Link Sleuth, or similar tools. These applications can systematically crawl an entire website, identify all accessible URLs, and often generate a visual representation of the site’s internal linking structure, revealing parent-child relationships between pages.

- Browser History and Bookmarking: As you explore, utilize browser history or bookmarking features to keep track of visited pages. Organize bookmarks into folders that mirror the site’s hierarchy to create an initial mental or digital map.

- Documentation via Spreadsheets or Mind Maps: Create a structured document, typically a spreadsheet, to list each unique URL. Include columns for page title, description, parent page, and any identified child pages. For a visual representation, mind-mapping software can be highly effective in illustrating the hierarchical relationships and content clusters.

- Categorizing Page Types: As pages are identified, categorize them (e.g., Homepage, About Us, Service Page, Product Detail, Blog Post, Contact, Legal, etc.). This helps in understanding the different templates and content types that will need to be recreated.
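As a rough illustration of the sitemap and spreadsheet strategies above, the sketch below reads the `<loc>` entries from a sitemap and infers each page’s parent from its URL path. The function names and sample sitemap are hypothetical, and the approach assumes the URL structure mirrors the page hierarchy, which is not true of every site.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def urls_from_sitemap(xml_text):
    """Return every <loc> value from a sitemap.xml document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall(".//sm:loc", SITEMAP_NS) if loc.text]


def parent_map(urls):
    """Map each URL path to its nearest ancestor path that is also in the list."""
    paths = sorted({urlparse(u).path.rstrip("/") or "/" for u in urls})
    known = set(paths)
    parents = {}
    for path in paths:
        parent = None
        candidate = path
        # Walk up one path segment at a time until a known page is found.
        while candidate != "/":
            candidate = candidate.rsplit("/", 1)[0] or "/"
            if candidate in known:
                parent = candidate
                break
        parents[path] = parent
    return parents


# Hypothetical sitemap content for illustration.
sample_sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/services/</loc></url>
  <url><loc>https://www.example.com/services/web-design/</loc></url>
  <url><loc>https://www.example.com/blog/2024/launch/</loc></url>
</urlset>"""

hierarchy = parent_map(urls_from_sitemap(sample_sitemap))
for path, parent in hierarchy.items():
    print(path, "<-", parent)
```

The resulting path-to-parent pairs can be pasted into the "parent page" column of the documentation spreadsheet and then corrected by hand where the URL structure is misleading.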

To facilitate an efficient content gathering process, the following table outlines common content types, effective extraction methods, and suitable tools or software:

| Content Type | Extraction Method | Tools/Software |
|---|---|---|
| Text Content | Manual copy-paste, “Save Page As,” web scraping, browser extensions. | Browser functions, text editor, specialized web scrapers (e.g., Scrapy, BeautifulSoup for Python), “Print to PDF” feature. |
| Images (JPG, PNG, GIF) | Right-click “Save Image As,” bulk image downloaders, browser developer tools (Network/Elements tab). | Browser functions, Image Downloader extensions, HTTrack, Screaming Frog SEO Spider (for discovering image URLs). |
| Media Files (Videos, Audio) | Identify direct source URLs via developer tools, media downloaders. | Browser Developer Tools, dedicated video downloaders (e.g., JDownloader, yt-dlp for general media), specific browser extensions. |
| Document Files (PDF, DOCX) | Direct link download, “Save Link As.” | Browser functions. |
| Overall Site Structure/URLs | Manual navigation, sitemap analysis, web crawling. | Screaming Frog SEO Spider, Xenu’s Link Sleuth, Google Search Console (if access is available), manual spreadsheet/mind map. |

Choosing the Right Tools and Technologies for Recreation

![How to Create a Website, Easily in [year]: The Ultimate Guide](https://www.listmixer.com/wp-content/uploads/2025/10/webdesignmockup.jpg)

When embarking on a website recreation project, selecting the appropriate tools and technologies is a critical decision that significantly influences the project’s efficiency, scalability, and long-term maintainability. This choice should align with the original site’s complexity, the project’s specific requirements, and the available resources, ensuring the new site not only replicates functionality but also improves upon it where possible. A well-informed decision at this stage can streamline development and future updates.

Comparing Content Management Systems for Website Recreation

For many website recreation projects, especially those involving content-rich sites, Content Management Systems (CMS) offer a robust and user-friendly foundation. These platforms provide a structured environment for managing content, user roles, and site functionality without requiring extensive coding knowledge for everyday operations. Understanding the distinctions between popular CMS options like WordPress, Joomla, and Drupal is essential for making an informed choice for your recreation efforts.

- WordPress: WordPress is renowned for its ease of use and extensive ecosystem of themes and plugins, making it an excellent choice for rapid development and sites with moderate complexity. Its flexibility allows for recreating a wide range of websites, from simple blogs to complex e-commerce platforms, thanks to plugins like WooCommerce. For a recreation project, WordPress can significantly reduce development time if the original site’s features can be mapped to existing plugins or themes. However, its flexibility can sometimes lead to performance issues if not optimized properly, and heavy reliance on numerous plugins might introduce security vulnerabilities or compatibility challenges.

- Joomla: Joomla offers a balance between ease of use and powerful features, often favored for more complex portal sites or community-driven platforms. It provides more built-in features for user management, multilingual content, and access control compared to a default WordPress installation, which can be advantageous when recreating sites with intricate user roles or diverse content structures. While it has a steeper learning curve than WordPress, Joomla’s robust framework allows for significant customization, making it suitable for recreating sites that require a solid structure for varied content types and user interactions. Its extension directory, though smaller than WordPress’, is well-curated.

- Drupal: Drupal is often chosen for highly complex, data-intensive, and enterprise-level websites due to its powerful architecture, advanced content modeling capabilities, and strong security features. Its flexibility is unparalleled among CMS platforms, allowing developers to build almost any type of site with custom content types, views, and workflows. For recreation projects involving highly structured data, intricate user permissions, or high traffic demands, Drupal provides a scalable and secure foundation. However, it has the steepest learning curve, requiring significant development expertise, which can translate to higher development costs and longer timelines. Its strengths lie in its API-first approach and enterprise-grade capabilities, making it ideal for recreating sites that demand ultimate control and customization.

The choice among WordPress, Joomla, and Drupal for website recreation hinges on a careful evaluation of the original site’s complexity, the required flexibility for content types and user roles, and the available development expertise.

Static Site Generators versus Dynamic Frameworks

The decision between using a static site generator (SSG) and a dynamic framework is fundamental, dictating how content is rendered and served. This choice largely depends on the original site’s characteristics, particularly its interactivity, content update frequency, and overall scale.

A static site generator processes content and templates at build time, producing plain HTML, CSS, and JavaScript files that are then served directly to users. This approach is ideal for sites where content changes infrequently, such as blogs, portfolios, documentation sites, or marketing pages. Examples include Jekyll, Hugo, and Gatsby. For recreation projects, an SSG is beneficial if the original site primarily served static content, prioritized performance, or aimed for enhanced security due to the absence of a server-side database. Static sites typically offer faster page loads, lower hosting costs, and reduced security risks compared to dynamic sites.
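To make the build-time idea concrete, here is a deliberately tiny sketch of what a static site generator does: it renders every page once and writes plain HTML files to disk. It is not how Jekyll, Hugo, or Gatsby are implemented; the template, the `build_site` function, and the page data are assumptions for illustration only.

```python
from pathlib import Path
from string import Template

# A single hypothetical page template; real generators support layouts and partials.
PAGE_TEMPLATE = Template(
    "<!doctype html>\n<html><head><title>$title</title></head>\n"
    "<body><h1>$title</h1>\n$body\n</body></html>\n"
)


def build_site(pages, output_dir):
    """Render every page once, at build time, into a plain HTML file."""
    output_dir = Path(output_dir)
    written = []
    for slug, page in pages.items():
        # Each page becomes <output_dir>/<slug>/index.html; an empty slug is the homepage.
        target = output_dir / slug / "index.html"
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(PAGE_TEMPLATE.substitute(page), encoding="utf-8")
        written.append(target)
    return written


# Hypothetical content that would normally come from Markdown files or a CMS export.
PAGES = {
    "": {"title": "Home", "body": "<p>Welcome.</p>"},
    "about": {"title": "About Us", "body": "<p>Who we are.</p>"},
}

if __name__ == "__main__":
    for path in build_site(PAGES, "public"):
        print("wrote", path)
```

Because the output is just files, any web server or static host can serve the `public` directory without a database or application runtime.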

Dynamic frameworks, on the other hand, generate content on the fly with each user request. They typically involve a server-side language (like Python, PHP, Node.js), a database, and a web server. This setup is essential for websites with frequently changing content, user interactions, personalized experiences, or complex business logic, such as e-commerce platforms, social networks, or web applications. Examples include Django (Python), Laravel (PHP), and Express.js (Node.js).

When recreating a site with extensive user-generated content, real-time data updates, or complex back-end processes, a dynamic framework is indispensable. While offering immense flexibility and functionality, they require more server resources and present a larger attack surface compared to static sites.

Selecting Front-end and Back-end Technologies

The selection of front-end and back-end technologies should be guided by the original site’s architecture, its interactivity level, and the complexity of its data management. A thoughtful combination ensures the recreated site is performant, scalable, and maintainable.

Front-end Technologies

Front-end technologies govern the user interface and user experience, handling everything the user sees and interacts with. Modern JavaScript frameworks are popular for building dynamic and responsive user interfaces.

- React: Developed by Facebook, React is a JavaScript library for building user interfaces, particularly single-page applications where data changes over time. It excels in creating interactive and complex UIs due to its component-based architecture and virtual DOM, which optimizes rendering performance. For recreating sites with rich, interactive user experiences, dashboards, or complex forms, React is a strong contender. Its widespread adoption means a vast ecosystem and community support.

- Vue.js: Vue.js is a progressive framework, meaning it can be adopted incrementally. It is known for its approachability, clear documentation, and excellent performance, often considered easier to learn than React or Angular. Vue is suitable for recreating sites that require a flexible, lightweight, and performant front-end, from simple interactive components to full-fledged single-page applications. Its reactivity system makes data binding straightforward, which is beneficial for dynamic content updates.

- Angular: Maintained by Google, Angular is a comprehensive framework for building enterprise-grade web applications. It offers a structured approach with built-in features for routing, state management, and testing. Angular is particularly well-suited for recreating large, complex applications that benefit from a highly opinionated framework, such as internal tools, large-scale data management systems, or applications requiring strict architectural patterns. Its learning curve is steeper, but it provides a complete development experience.

Back-end Technologies

Back-end technologies handle server-side logic, database interactions, and API management, forming the core infrastructure that powers dynamic websites.

- Node.js (with Express.js): Node.js allows JavaScript to be used on the server side, enabling full-stack JavaScript development. Paired with frameworks like Express.js, it’s highly efficient for building scalable network applications, real-time services, and APIs. For recreating sites that require high concurrency, real-time features (e.g., chat applications, live dashboards), or an existing JavaScript skillset, Node.js is an excellent choice. Its non-blocking I/O model is advantageous for performance.

- Python (with Django/Flask): Python, a versatile language, is widely used for web development, data science, and AI. Frameworks like Django provide a “batteries-included” approach, offering an ORM, admin panel, and robust security features out of the box, making it ideal for rapid development of complex, database-driven applications. Flask, a micro-framework, offers more flexibility for smaller projects or custom architectures. Python is suitable for recreating sites with complex business logic, data processing requirements, or those that might integrate machine learning capabilities.

- PHP (with Laravel/Symfony): PHP remains a dominant force in web development, powering a significant portion of the internet. Frameworks like Laravel offer an elegant syntax, robust features, and a thriving ecosystem, simplifying common web development tasks like routing, authentication, and caching. Symfony, another powerful PHP framework, is known for its modularity and performance, often used for large-scale enterprise applications. PHP frameworks are excellent for recreating a wide range of websites, from e-commerce platforms to content portals, especially if the original site was built with PHP or if there’s existing expertise in the language.

Summary of Technology Choices for Recreation

The following table summarizes key technology categories, popular options, and their respective pros and cons specifically in the context of website recreation projects. This overview can assist in quickly evaluating potential tools against project requirements.

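| Technology Category | Popular Options | Pros for Recreation | Cons for Recreation |
|---|---|---|---|
| Content Management Systems (CMS) | WordPress, Joomla, Drupal | Structured management of content, user roles, and site functionality without extensive coding; theme and plugin ecosystems can shorten development when original features map to existing extensions. | Heavy plugin reliance can cause performance, security, or compatibility issues (WordPress); Joomla and Drupal have steeper learning curves, and Drupal can mean higher costs and longer timelines. |
| Site Generation Method | Static Site Generators (e.g., Hugo, Jekyll), Dynamic Frameworks (e.g., Django, Laravel) | SSGs: fast pages, lower hosting costs, reduced security risk. Dynamic frameworks: support frequently changing content, user interaction, personalization, and complex business logic. | SSGs: best suited to content that changes infrequently. Dynamic frameworks: require more server resources and present a larger attack surface. |
| Front-end Frameworks | React, Vue.js, Angular | React: component-based architecture, virtual DOM, vast ecosystem. Vue.js: approachable, incrementally adoptable, straightforward data binding. Angular: structured, with built-in routing, state management, and testing. | Angular has a steeper learning curve and is highly opinionated. |
| Back-end Technologies | Node.js (Express.js), Python (Django/Flask), PHP (Laravel/Symfony) | Node.js: full-stack JavaScript and non-blocking I/O for real-time features. Python: Django’s ORM, admin panel, and security features; Flask’s flexibility. PHP: Laravel and Symfony simplify routing, authentication, and caching. | Fit depends on the original site’s stack and the team’s existing expertise in each language. |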

Development and Content Integration

After thoroughly understanding the existing website’s structure and selecting the appropriate tools, the next crucial phase involves the actual development and the careful integration of all extracted content. This stage transforms the blueprint into a functional, live website, requiring meticulous planning and execution to ensure a seamless transition and a robust final product.

This section details the sequential workflow from setting up the development environment to coding the various parts of the website, followed by strategies for efficiently integrating content. It also emphasizes methods to maintain data integrity and consistency throughout the migration process, concluding with essential checks to validate the successful integration.

Sequential Development Workflow

The development of a new website, especially when recreating an existing one, benefits greatly from a structured, sequential workflow. This approach ensures that foundational elements are in place before building upon them, minimizing potential issues and streamlining the entire process.

- Environment Setup: Establishing a robust development environment is the initial step. This typically involves setting up a local server (e.g., Apache, Nginx) with a runtime environment (e.g., PHP, Node.js, Python) and a database system (e.g., MySQL, PostgreSQL). Version control, such as Git, is indispensable for tracking changes, collaborating with teams, and reverting to previous states if necessary. Integrated Development Environments (IDEs) like VS Code or Sublime Text are configured with relevant extensions to enhance productivity.

- Database Design and Implementation: Based on the analysis of the existing website’s content and data structure, a new database schema is designed. This involves defining tables, columns, data types, relationships, and primary/foreign keys. Normalization principles are applied to reduce data redundancy and improve data integrity. Once designed, the database is implemented, and initial migrations are run to create the schema in the chosen database system.

- Back-End Development: The back-end serves as the server-side logic and data management layer. This phase involves developing Application Programming Interfaces (APIs) to handle requests from the front-end, process business logic, interact with the database, and manage user authentication and authorization. Frameworks like Laravel (PHP), Django (Python), Ruby on Rails (Ruby), or Express.js (Node.js) are commonly used to accelerate development and provide structured patterns for building robust applications. This includes implementing data models, controllers, and routing to expose data and functionality.

- Front-End Development: The front-end is the user-facing part of the website, responsible for presenting content and interacting with users. This stage involves building the user interface (UI) components using HTML for structure, CSS for styling, and JavaScript for interactivity. Modern front-end frameworks like React, Angular, or Vue.js are often employed to create dynamic, responsive, and maintainable user experiences. The front-end consumes data and functionality provided by the back-end APIs, displaying it in a visually appealing and intuitive manner, ensuring cross-browser compatibility and responsiveness across various devices.
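The database design step in the workflow above can be sketched with SQLite from the Python standard library. The `pages` and `media` tables and their columns are hypothetical; a real schema should follow from the content inventory, and production projects would more likely use MySQL or PostgreSQL with a migration tool.

```python
import sqlite3

# Hypothetical normalized schema: pages form a hierarchy, media rows belong to a page.
SCHEMA = """
CREATE TABLE pages (
    id        INTEGER PRIMARY KEY,
    parent_id INTEGER REFERENCES pages(id),
    slug      TEXT NOT NULL UNIQUE,
    title     TEXT NOT NULL,
    body      TEXT NOT NULL DEFAULT ''
);
CREATE TABLE media (
    id      INTEGER PRIMARY KEY,
    page_id INTEGER NOT NULL REFERENCES pages(id) ON DELETE CASCADE,
    path    TEXT NOT NULL,
    alt     TEXT NOT NULL DEFAULT ''
);
"""


def create_database(path=":memory:"):
    """Create the schema and return a connection with foreign keys enforced."""
    conn = sqlite3.connect(path)
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves this off by default
    conn.executescript(SCHEMA)
    return conn


conn = create_database()
conn.execute("INSERT INTO pages (slug, title) VALUES ('home', 'Home')")
conn.execute(
    "INSERT INTO pages (parent_id, slug, title) VALUES (1, 'services', 'Services')"
)
conn.execute("INSERT INTO media (page_id, path, alt) VALUES (1, 'img/hero.jpg', 'Hero')")
conn.commit()
print(conn.execute("SELECT slug, parent_id FROM pages ORDER BY id").fetchall())
```

Storing the parent relationship as a foreign key, rather than repeating parent titles in each row, is one small instance of the normalization mentioned above.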

Efficient Content Integration Procedures

Integrating the extracted content into the newly developed website structure requires careful planning to ensure accuracy and efficiency. The approach chosen often depends on the volume and complexity of the content.

For smaller websites with limited content, manual content migration can be feasible. This involves directly copying and pasting text, images, and other media into the new website’s content management system (CMS) or directly into the new HTML templates. While time-consuming, it offers precise control over formatting and ensures each piece of content is reviewed.

For larger websites or those with structured data, automated or semi-automated methods are significantly more efficient. This often involves scripting data migration. For example, a Python or PHP script can read data from exported CSV files, JSON files, or even directly from the old database, and then insert it into the new database via the new website’s API or direct database interaction. This method requires careful mapping of old data fields to new ones and robust error handling.
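A scripted migration of this kind might look like the following minimal sketch. It assumes a CSV export with WordPress-style column names and a new `pages` table in SQLite; the `FIELD_MAP`, table layout, and sample rows are all hypothetical and would need to match your actual export and schema.

```python
import csv
import io
import sqlite3

# Hypothetical mapping from old export column names to new schema columns.
FIELD_MAP = {"post_title": "title", "post_name": "slug", "post_content": "body"}


def migrate_csv(csv_file, conn):
    """Insert each CSV row into the new `pages` table and collect failures."""
    failures = []
    migrated = 0
    # The header is line 1 of the file, so data rows start at line 2.
    for line_no, row in enumerate(csv.DictReader(csv_file), start=2):
        try:
            record = {new: (row.get(old) or "").strip() for old, new in FIELD_MAP.items()}
            if not record["title"] or not record["slug"]:
                raise ValueError("title and slug are required")
            conn.execute(
                "INSERT INTO pages (title, slug, body) VALUES (:title, :slug, :body)",
                record,
            )
            migrated += 1
        except (ValueError, sqlite3.Error) as exc:
            # Record the failure and keep going so one bad row does not stop the run.
            failures.append((line_no, repr(exc)))
    conn.commit()
    return migrated, failures


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE pages (title TEXT NOT NULL, slug TEXT NOT NULL UNIQUE, body TEXT)"
)
# Hypothetical export: one valid row, one missing a title, one with a duplicate slug.
export = io.StringIO(
    "post_title,post_name,post_content\n"
    "Hello,hello,First post\n"
    ",untitled,No title here\n"
    "Hello again,hello,Duplicate slug\n"
)
migrated, failures = migrate_csv(export, conn)
print(f"migrated {migrated} rows")
for line_no, reason in failures:
    print(f"line {line_no}: {reason}")
```

Collecting failures with their line numbers, instead of aborting on the first error, gives a list that can be reviewed and corrected by hand after the run.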

If the original website used a CMS, and the new website also uses a CMS (even a different one), there might be specific import/export tools available. For instance, migrating blog posts from an old WordPress site to a new one might involve using WordPress’s native export/import functionality, or a specialized plugin that handles content, users, and media. Similarly, migrating e-commerce product data from one platform to another often leverages CSV imports, which require precise column mapping to ensure product details, pricing, and inventory are correctly transferred.

Data Integrity and Consistency Strategies

Maintaining data integrity and consistency during content migration is paramount to the success of the website recreation project. Any errors in this phase can lead to broken links, incorrect information, or a degraded user experience.

“Data integrity is the assurance that data is consistent, correct, and accurate throughout its entire lifecycle. Data consistency ensures that the data in a database is valid according to predefined rules and relationships.”

One primary strategy involves thorough data validation and sanitization. Before any content is inserted into the new database, it must be validated against the new schema’s rules (e.g., data types, length constraints, required fields). Sanitization processes are crucial to remove any malicious code (like XSS scripts) or unwanted characters that might have been present in the old content, protecting the new website from security vulnerabilities. This also includes ensuring that all special characters and encodings (like UTF-8) are handled correctly to prevent display issues.
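The sketch below shows one way to apply such rules to plain-text fields before insertion. The `RULES` table and field names are assumptions. Note also that the sketch neutralizes markup by escaping it with `html.escape` rather than removing it; content that must keep rich HTML would need a dedicated, well-tested sanitizer library instead.

```python
import html
import unicodedata

# Hypothetical rules for the new schema: field -> (required, max_length).
RULES = {"title": (True, 120), "summary": (False, 300)}


def clean_text(value):
    """Normalize Unicode, drop control characters, and escape HTML metacharacters."""
    value = unicodedata.normalize("NFC", value)
    # Keep newlines and tabs, remove every other control character.
    value = "".join(
        ch for ch in value if ch in "\n\t" or unicodedata.category(ch) != "Cc"
    )
    return html.escape(value.strip())


def validate(record):
    """Return (cleaned_record, errors) for one record checked against RULES."""
    cleaned, errors = {}, []
    for field, (required, max_length) in RULES.items():
        raw = record.get(field)
        if raw is None or not str(raw).strip():
            if required:
                errors.append(f"{field}: required")
            continue
        if not isinstance(raw, str):
            errors.append(f"{field}: expected text")
            continue
        value = clean_text(raw)
        if len(value) > max_length:
            errors.append(f"{field}: longer than {max_length} characters")
            continue
        cleaned[field] = value
    return cleaned, errors


# "Cafe" followed by a combining accent, plus an injected script tag.
good, good_errors = validate({"title": "Cafe\u0301 <script>alert(1)</script>", "summary": ""})
bad, bad_errors = validate({"title": "   ", "summary": 42})
print(good, good_errors)
print(bad, bad_errors)
```

Records that come back with a non-empty error list should be logged and held back rather than inserted.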

Another key strategy is meticulous content mapping. Each field from the old content structure must be accurately mapped to its corresponding field in the new database. This often requires creating a detailed mapping document or script that defines how each piece of information should be transformed or placed. For example, if an old ‘post_content’ field needs to be split into ‘introduction’ and ‘body’ fields in the new structure, the migration script must handle this transformation logically. For images and other media, ensuring that file paths are correctly updated and that files are physically moved to the new server’s designated media directories is vital.
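As an illustration of the two transformations just described, the sketch below splits a legacy `post_content` value at its first blank line and rewrites media paths from an old prefix to a new one. The splitting rule and both path prefixes are assumptions; the right rule depends on how the original content was actually written.

```python
def split_post_content(post_content):
    """Split legacy content at the first blank line into (introduction, body)."""
    normalized = post_content.replace("\r\n", "\n").strip()
    introduction, _, body = normalized.partition("\n\n")
    return introduction.strip(), body.strip()


def rewrite_media_path(old_path, old_prefix="/wp-content/uploads/", new_prefix="/media/"):
    """Swap the old upload prefix for the new media directory; leave other paths alone."""
    if old_path.startswith(old_prefix):
        return new_prefix + old_path[len(old_prefix):]
    return old_path


legacy = "Intro line.\r\n\r\nFirst paragraph.\r\n\r\nSecond paragraph."
introduction, body = split_post_content(legacy)
print(repr(introduction))
print(repr(body))
print(rewrite_media_path("/wp-content/uploads/2024/team.png"))
```

Content with no blank line ends up entirely in the introduction with an empty body, which is a case worth flagging for manual review.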

Implementing robust error logging during the migration process is also critical. Any content that fails to migrate due to validation errors, missing fields, or database constraints should be logged, allowing developers to review and manually correct these exceptions. Regular backups of both the source content and the new database before, during, and after migration provide a safety net, allowing for quick recovery in case of unforeseen issues. Finally, performing test migrations on a staging environment before the final production migration helps identify and resolve potential issues in a controlled setting, ensuring a smoother transition.

Post-Integration Essential Checks

After the initial content integration, a series of comprehensive checks are necessary to verify that all content has been transferred correctly and functions as expected. This validation phase is crucial for identifying and rectifying any issues before the website is fully launched or made public.

The following list outlines essential checks to perform, ensuring the recreated website is robust, accurate, and provides an optimal user experience:

- Broken Links and Redirects: Verify all internal and external links are functional. Implement 301 redirects for any old URLs that have changed to preserve SEO value and user experience, checking these redirects thoroughly.

- Missing Images and Media: Confirm that all images, videos, and other media files are loading correctly and are displayed at their intended sizes and aspect ratios. Check for any broken image placeholders.

- Content Formatting and Layout: Review pages and posts for correct typography, paragraph breaks, lists, tables, and overall layout. Ensure consistency with the new design system and check for any unexpected styling issues.

- SEO Metadata: Validate that page titles, meta descriptions, alt text for images, and other SEO-related attributes have been correctly migrated or updated according to the new website’s SEO strategy.

- Interactive Elements Functionality: Test all interactive elements such as forms, search bars, navigation menus, and call-to-action buttons to ensure they are fully functional and submit data correctly.

- Cross-Browser and Device Responsiveness: Check the website’s appearance and functionality across different web browsers (Chrome, Firefox, Safari, Edge) and various devices (desktops, tablets, smartphones) to ensure a consistent and responsive user experience.

- Performance Optimization: Monitor page load times and overall website performance. Address any identified bottlenecks, such as unoptimized images or inefficient scripts, to ensure a fast and smooth browsing experience.

- User Accounts and Permissions: If applicable, verify that user accounts, roles, and permissions have been migrated accurately and that users can log in and access the correct content and features.

- Data Accuracy: Conduct spot checks on critical data points, such as product prices, inventory levels, contact information, and dates, to confirm that the migrated data is accurate and matches the source.

- Security Vulnerabilities: Perform basic security checks to ensure no obvious vulnerabilities were introduced during migration, such as exposed API keys or unencrypted sensitive data.
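The redirect check in the list above can be partly done offline, before anything is deployed. The following sketch takes a hypothetical map of old paths to new paths and reports redirect chains (an old URL that needs more than one hop) and loops. It does not issue HTTP requests, so the live server’s 301 responses still need to be verified separately.

```python
def resolve_redirects(redirects):
    """Follow each old URL through the redirect map to its final target.

    Returns (final_targets, problems), where problems is a list of
    ("chain", start) and ("loop", start) tuples.
    """
    final_targets, problems = {}, []
    for start in redirects:
        seen = [start]
        current = redirects[start]
        # Keep following while the target is itself redirected somewhere else.
        while current in redirects:
            if current in seen:
                problems.append(("loop", start))
                break
            seen.append(current)
            current = redirects[current]
        else:
            final_targets[start] = current
            if len(seen) > 1:
                problems.append(("chain", start))
    return final_targets, problems


# Hypothetical redirect map for illustration.
REDIRECTS = {
    "/old-about": "/about-us",
    "/about-us": "/about",
    "/a": "/b",
    "/b": "/a",
    "/contact.html": "/contact",
}

final_targets, problems = resolve_redirects(REDIRECTS)
print(final_targets)
print(problems)
```

Chains are usually worth flattening so that every old URL redirects straight to its final destination, and loops must be fixed before launch because they make the page unreachable.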

Testing, Deployment, and Post-Launch Considerations

After meticulously structuring and integrating content into your recreated website, the critical next steps involve rigorous testing, a smooth deployment process, and establishing robust post-launch protocols. These phases are paramount to ensure the website functions flawlessly, provides an optimal user experience, and remains stable and secure in its live environment, thereby preserving the integrity and performance of the original site’s recreation.

Comprehensive Website Testing Methodologies

Before any website goes live, a thorough testing phase is indispensable to identify and rectify potential issues. This process ensures that the recreated website not only looks correct but also operates as intended, is easy for users to navigate, and performs efficiently under various conditions. Employing diverse testing methodologies covers a broad spectrum of potential problems, from broken links to slow loading times.

- Functional Testing: This type of testing verifies that every feature and function of the website operates according to its specifications. It involves checking all links, forms, buttons, databases, and any interactive elements to ensure they perform their intended actions correctly. For instance, if a contact form is recreated, functional testing confirms that submitting the form successfully sends an email and stores the data as expected. This phase often involves creating test cases for each specific function to validate its behavior.

- Usability Testing: Focusing on the user experience, usability testing assesses how easy and intuitive the website is to navigate and interact with. This involves real users (or representatives of the target audience) performing typical tasks on the site, such as finding specific information, completing a purchase, or signing up for a newsletter. Observations from these sessions help identify confusing layouts, unclear instructions, or inefficient workflows, ensuring the recreated site is as user-friendly as, or even better than, the original. Tools like heatmaps and session recordings can also provide valuable insights into user behavior.

- Performance Testing: Performance testing evaluates the website’s responsiveness, speed, and stability under various load conditions. This includes measuring page load times, server response times, and the site’s ability to handle multiple concurrent users without degrading performance. Load testing simulates expected user traffic, while stress testing pushes the website beyond its normal operational limits to see where it breaks. This is crucial for ensuring the recreated site can handle anticipated traffic volumes, especially for e-commerce sites or platforms with dynamic content. For example, if the original site handled 1,000 concurrent users with a 2-second load time, the recreated site must meet or exceed this benchmark.
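The benchmark comparison described for performance testing can be expressed as a small check. The following is a minimal sketch in Python, assuming load times have already been collected by a load-testing tool. The function names, the choice of the 95th percentile, and the sample values are illustrative assumptions rather than part of any specific tool.

```python
import math

def percentile(samples, pct):
    """Return the pct-th percentile of samples (nearest-rank method)."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))  # 1-based nearest rank
    return ordered[max(rank, 1) - 1]

def meets_benchmark(load_times_s, baseline_s=2.0, pct=95):
    """True if the pct-th percentile load time is at or under the baseline."""
    return percentile(load_times_s, pct) <= baseline_s

# Hypothetical load times (seconds) recorded during a load test
recreated_site = [1.2, 1.4, 1.1, 1.9, 1.6, 1.3, 1.8, 1.5, 1.7, 1.0]
print(meets_benchmark(recreated_site))  # True: the slowest sample is 1.9 s
```

Comparing a high percentile rather than the average keeps a handful of slow page loads from being hidden by many fast ones.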

Website Deployment Checklist

The deployment phase transitions the website from a development or staging environment to the live production server. A structured checklist is vital to ensure all necessary configurations are in place, minimizing the risk of errors or downtime during the launch. This systematic approach guarantees that the recreated website is securely and efficiently made available to its audience.

- Server Setup and Configuration: Confirm the hosting environment meets all technical requirements (e.g., PHP version, database type, memory limits). Ensure web server software (Apache, Nginx) is correctly configured, including virtual hosts, SSL certificates, and necessary modules. Verify file permissions are set appropriately to prevent unauthorized access or modification.

- Database Migration: Export the final, tested database from the staging environment and import it into the production database server. Update all database connection strings in the website’s configuration files to point to the new production database.

- File Transfer: Upload all website files (HTML, CSS, JavaScript, images, backend code) to the production server. Utilize secure file transfer protocols like SFTP or SCP to protect data integrity during transit. Verify that all files are in their correct directories.

- Domain Configuration: Update DNS records (A records, CNAME records) to point the domain name to the IP address of the new production server. Allow time for DNS propagation, which depends on the TTL values of the records and can take anywhere from a few minutes to a day or more. Lowering the TTL ahead of the switch shortens the wait.

- Security Measures Implementation: Activate the SSL/TLS certificate for HTTPS to encrypt data transmission. Configure firewalls, implement intrusion detection systems, and ensure all security patches are applied to the server and website software. Remove any development-specific debugging tools or sensitive configuration files that are not needed in production.

- Backup Strategy Confirmation: Before going live, perform a full backup of the entire production server and database. Confirm that automated backup routines are configured and functioning correctly for ongoing data protection.

- CDN Integration (if applicable): If a Content Delivery Network (CDN) is being used, ensure it is properly configured and caching website assets effectively to improve load times for global users.

- Search Engine Optimization (SEO) Checks: Verify that robots.txt is correctly configured to allow search engine crawling (unless specific pages need to be excluded) and that sitemaps are generated and submitted to search engines. Ensure meta tags and titles are optimized.

- Analytics Setup: Confirm that web analytics tools (e.g., Google Analytics) are correctly installed and tracking website traffic and user behavior from the moment of launch.

- Error Pages Configuration: Ensure custom error pages (404 Not Found, 500 Internal Server Error) are configured and provide helpful information to users, maintaining brand consistency.
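One checklist item that lends itself to automation is the robots.txt check, since a staging file that blocks all crawlers is easy to ship to production by mistake. The sketch below uses Python's standard `urllib.robotparser` module. The helper name `crawl_allowed`, the sample file contents, and the `example.com` URL are assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser

def crawl_allowed(robots_txt, url, user_agent="*"):
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

# A staging-style file that blocks everything (should not reach production)
staging_robots = "User-agent: *\nDisallow: /\n"
# A production-style file that only hides a hypothetical admin area
production_robots = "User-agent: *\nDisallow: /admin/\n"

home = "https://example.com/"
print(crawl_allowed(staging_robots, home))     # False
print(crawl_allowed(production_robots, home))  # True
```

Running a check like this against the file that is about to be deployed catches the problem before search engines see it.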

Essential Post-Launch Activities

Launching a website is not the final step; it marks the beginning of continuous monitoring, maintenance, and optimization. Post-launch activities are crucial for ensuring the website remains stable, secure, performs optimally, and continues to meet user needs and business objectives over time. Neglecting these aspects can lead to security vulnerabilities, performance degradation, and a poor user experience.

- Monitoring: Continuously monitor website performance, uptime, and security. Utilize tools for real-time tracking of server resources (CPU, memory), network traffic, and application errors. Set up alerts for critical issues such as downtime, slow response times, or unusual activity that might indicate a security breach. Regular checks of error logs are also essential for identifying and addressing issues proactively. For instance, monitoring tools like New Relic or Datadog can provide insights into application performance and server health, alerting administrators if a critical threshold is exceeded.

- Backups: Implement a robust and automated backup strategy for both the website files and its database. Ensure backups are stored off-site or in a separate cloud storage location for disaster recovery purposes. Regularly test the backup restoration process to confirm data integrity and the ability to quickly recover the website in case of data loss or corruption. A common practice involves daily incremental backups with weekly full backups, retained for a specified period.

- Ongoing Maintenance: This involves regular updates to the website’s core software, themes, and plugins to patch security vulnerabilities and benefit from new features. Content updates are also part of ongoing maintenance, ensuring information remains current and relevant. Periodically review and optimize website code, database queries, and server configurations to maintain optimal performance. Security audits should be conducted regularly to identify and mitigate potential threats. For example, if using a CMS like WordPress, regular updates for the core system and all installed plugins are vital to prevent exploits.

- Security Audits and Patches: Conduct regular security audits to identify vulnerabilities. Stay informed about the latest security threats and apply patches to the server operating system, web server software, and website application as soon as they become available. Implement Web Application Firewalls (WAFs) and regularly review access logs for suspicious activity.

- Performance Optimization: Continuously analyze website performance metrics and implement optimizations as needed. This could include image optimization, caching strategies, code minification, and database indexing to ensure the website remains fast and responsive as content grows and traffic changes.
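The backup schedule mentioned above (daily incremental backups, weekly full backups, and a retention period) can be sketched as two small functions. This is a minimal illustration in Python, not a backup tool: the function names, the choice of Sunday for full backups, and the 30-day retention window are all assumptions.

```python
from datetime import date, timedelta

def backup_kind(day, full_weekday=6):
    """Return 'full' on the chosen weekday (6 = Sunday), else 'incremental'."""
    return "full" if day.weekday() == full_weekday else "incremental"

def expired(backup_dates, today, retention_days=30):
    """Return the backup dates that fall outside the retention window."""
    cutoff = today - timedelta(days=retention_days)
    return [d for d in backup_dates if d < cutoff]

today = date(2024, 3, 10)  # a Sunday
print(backup_kind(today))                      # full
print(backup_kind(today + timedelta(days=1)))  # incremental
print(expired([date(2024, 1, 1), date(2024, 3, 1)], today))
```

A real retention policy also has to keep any full backup that a retained incremental backup depends on, which this sketch does not model.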

“A well-tested website is a reliable website, and a reliably deployed website with consistent post-launch care is a sustainable one. These stages are not just checkboxes but commitments to digital excellence.”

The following table provides a structured overview of the key testing phases, their objectives, and recommended tools or methods, offering a practical guide for ensuring the quality of your recreated website.

| Testing Phase | Key Objectives | Recommended Tools/Methods |
|---|---|---|
| Functional Testing | Verify all features and functionalities work as specified; ensure forms, links, and interactive elements are operational. | Manual testing, Automated testing frameworks (Selenium, Cypress), Unit testing (Jest, PHPUnit), Integration testing. |
| Usability Testing | Assess user-friendliness, navigation, and overall user experience; identify areas of confusion or friction. | User interviews, A/B testing, Heatmaps (Hotjar), Session recordings, User acceptance testing (UAT). |
| Performance Testing | Evaluate website speed, responsiveness, and stability under various load conditions; ensure optimal load times. | Load testing tools (JMeter, LoadRunner), Page speed analysis (Google PageSpeed Insights, GTmetrix), Browser developer tools. |
| Security Testing | Identify vulnerabilities, protect against attacks (XSS, SQL Injection), ensure data privacy and integrity. | Penetration testing, Vulnerability scanners (OWASP ZAP, Nessus), Security audits, Code reviews. |
| Compatibility Testing | Ensure consistent appearance and functionality across different browsers, devices, and operating systems. | Cross-browser testing tools (BrowserStack, Sauce Labs), Device emulators, Responsive design testing. |
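As an illustration of the Functional Testing row, checking that links are operational can be partly automated. The sketch below uses only the Python standard library to collect links from a page and report those that return an error status. The class and function names are hypothetical, and the HTTP lookup is replaced by a fixed table of made-up responses so the example runs without network access.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

class LinkCollector(HTMLParser):
    """Collect href targets of <a> tags, resolved against a base URL."""
    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(urljoin(self.base_url, href))

def broken_links(html, base_url, get_status):
    """Return links whose status (from get_status) is 400 or higher."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    return [url for url in collector.links if get_status(url) >= 400]

# Stand-in for a real HTTP client: a fixed table of hypothetical responses
fake_statuses = {"https://example.com/about": 200, "https://example.com/old": 404}
page = '<a href="/about">About</a> <a href="/old">Old page</a>'
print(broken_links(page, "https://example.com/", fake_statuses.get))
# ['https://example.com/old']
```

Passing the status lookup in as a function keeps the parsing logic testable. In practice it would be swapped for a call made through whichever HTTP client the project already uses.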

The deployment pipeline illustrates the structured journey of the recreated website from its initial development stages to its final live production environment. This methodical progression ensures that changes are thoroughly tested and validated at each step, minimizing risks and maintaining stability.

Deployment Pipeline Diagram Description:

The diagram begins with the Development Environment, which represents the local machines where developers write and test code. This is the initial stage where features are built and integrated. Developers commit their code changes to a version control system, typically Git, which serves as the central repository for all project code.

From the version control system, code is then automatically or manually pushed to the Staging Environment. This environment is designed to mirror the production environment as closely as possible in terms of server configuration, database, and content. Its primary purpose is to allow for comprehensive integration testing and content review without impacting the live site. Stakeholders and content creators often review the site here.

Following successful validation in staging, the website progresses to the Testing Environment. While often integrated with staging, a dedicated testing environment can provide a sandbox for specific types of testing, such as performance, security, and extensive user acceptance testing (UAT) with a larger group of internal or external testers. Automated test suites are run here to ensure no regressions have been introduced.

Once all tests are passed and the website is deemed ready for public access, it is then deployed to the Live Production Server. This is the environment where the website is publicly accessible to end-users. The deployment process to production is often automated to reduce human error and ensure a consistent, reliable release. This final stage is continuously monitored for performance, security, and availability to ensure a seamless user experience.
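The progression described above can be modeled as an ordered list of stages in which a build advances only when its checks pass. This is a conceptual sketch in Python, not the configuration of any particular CI/CD tool. The `Stage` and `promote` names are assumptions chosen for the example.

```python
from enum import IntEnum

class Stage(IntEnum):
    """Pipeline stages in the order described in the diagram."""
    DEVELOPMENT = 1
    STAGING = 2
    TESTING = 3
    PRODUCTION = 4

def promote(current, checks_passed):
    """Return the next stage if checks passed; otherwise stay put."""
    if not checks_passed or current is Stage.PRODUCTION:
        return current
    return Stage(current + 1)

# Walk a build through the pipeline; a failed check halts promotion
stage = Stage.DEVELOPMENT
for result in [True, True, False, True]:
    stage = promote(stage, result)
    print(stage.name)
# STAGING, TESTING, TESTING, PRODUCTION
```

The gate in `promote` reflects the point made above: nothing reaches production without being validated at the preceding stage.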

Wrap-Up

Ultimately, recreating a website is more than just rebuilding; it is an opportunity for significant improvement and innovation. By methodically addressing each stage—from initial analysis and strategic tool selection to diligent development, comprehensive testing, and thoughtful deployment—organizations can achieve a superior online presence that is both functional and future-proof. This detailed approach ensures that the new website not only mirrors the original’s strengths but also significantly enhances user experience and operational efficiency, setting a strong foundation for future digital success.

Key Questions Answered

Why recreate a website instead of just updating it?

Recreation is often chosen when the existing site has fundamental architectural flaws, outdated technology, or a need for a complete overhaul to meet new business goals, which simple updates cannot address.

How long does the website recreation process typically take?

The timeline varies significantly based on complexity, content volume, and team size, ranging from a few weeks for simpler sites to several months for large, intricate platforms.

Are there any legal considerations for content when recreating a website?

Yes, it’s crucial to ensure you have the rights or licenses for all content, images, and media being carried over or newly created to avoid copyright infringement and other legal issues.

Will recreating my website negatively impact its search engine optimization (SEO)?

If managed correctly with proper redirects, content migration, and technical best practices, a recreation can actually improve SEO by enhancing site performance, user experience, and mobile responsiveness.
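Proper redirects usually means mapping each old URL to its new location with a permanent (301) redirect. One detail worth verifying before launch is that the map contains no loops or multi-hop chains. The following Python sketch shows one way to check this. The paths and the `resolve` helper are hypothetical examples, not taken from a real site.

```python
def resolve(redirects, path, max_hops=10):
    """Follow redirects from path; return (final_path, hop_count)."""
    hops = 0
    seen = {path}
    while path in redirects:
        path = redirects[path]
        hops += 1
        if path in seen or hops > max_hops:
            raise ValueError(f"redirect loop or overly long chain at {path}")
        seen.add(path)
    return path, hops

# Hypothetical old-to-new URL map for permanent (301) redirects
redirects = {
    "/about-us.html": "/about",
    "/services.php": "/what-we-do",
    "/what-we-do": "/services",  # creates a two-hop chain
}
chains = {old: resolve(redirects, old) for old in redirects}
print({old: r for old, r in chains.items() if r[1] > 1})
# {'/services.php': ('/services', 2)}
```

Any entry reported here can be rewritten to point straight at its final destination, so visitors and crawlers reach the new page in a single hop.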